The Shift from Standalone AI Models to Enterprise AI Systems

Artificial intelligence in 2026 is no longer defined by what a single model can do. Across recent research spanning artificial intelligence, cloud computing, IoT, education, finance, and digital governance, a consistent pattern has emerged: real enterprise value now depends on how AI is designed, governed, and integrated as a system, not on isolated algorithms or pilot projects.

Broadly, today’s AI landscape can be grouped into three major model categories, each representing a distinct trade-off between capability, cost, control, and scalability.

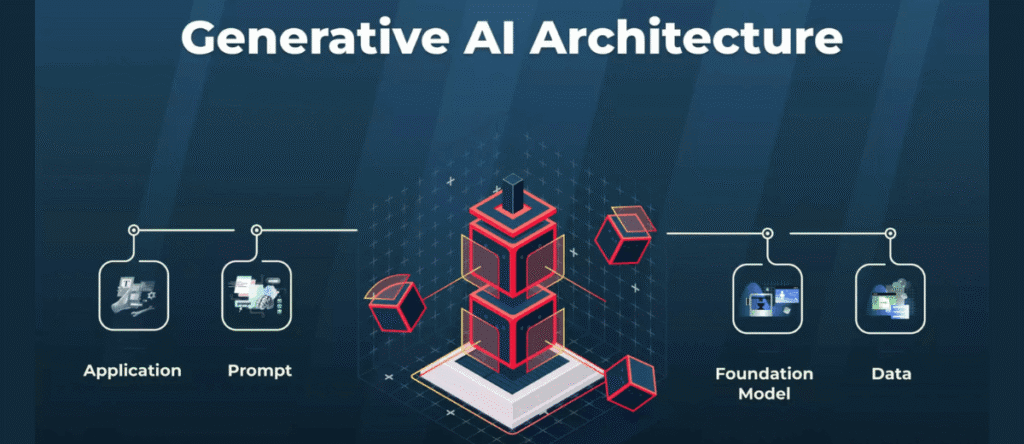

1. Generative Foundation Models

Generative Artificial Intelligence (Generative AI) refers to a class of AI systems that are capable of creating new content rather than merely classifying or predicting existing data. Unlike earlier AI systems focused on categorization and inference, generative AI systems can produce human-like text, images, code, audio, and other complex outputs.

Foundation models are large, pre-trained neural networks trained on massive, heterogeneous datasets and designed to learn generalized representations that can be adapted across a wide range of downstream tasks. They support zero-shot and few-shot learning through prompt engineering, can be specialized further through fine-tuning, and serve as the core infrastructure underlying modern generative AI systems (Narapareddy, 2025).

Generative foundation models (e.g., GPT-5, Gemini 3) sit at the top of the capability spectrum. These large, general-purpose models deliver broad task coverage with minimal setup, making them ideal for experimentation and horizontal use cases.

Pros of Generative AI Foundation Models

- Scalable productivity across knowledge workflows: Generative AI enables enterprises to automate and accelerate high-value knowledge work such as document drafting, software development, customer support responses, and internal knowledge retrieval.

- Faster deployment with minimal task-specific training: Foundation models support zero-shot and few-shot learning, enabling enterprises to roll out new AI use cases without extensive retraining or data labeling. This lowers deployment friction and allows business teams to experiment and scale use cases more rapidly.

- Improved decision support and knowledge accessibility: By summarizing large document sets, answering natural-language queries, and synthesizing insights across internal data, generative AI improves access to institutional knowledge. This enhances decision speed and consistency, particularly in complex environments such as finance, legal, healthcare, and operations.

- Automation of complex, end-to-end workflows: Recent enterprise use cases show generative AI automating not just single tasks but entire workflows, such as code generation plus testing, clinical documentation plus compliance formatting, or customer support triage plus response drafting, unlocking system-level efficiency gains.
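Zero-shot and few-shot adaptation happens in the prompt rather than in the model weights. The following is a minimal Python sketch, assuming a plain-text Input/Output prompt layout; the function name `build_few_shot_prompt` and the ticket-triage examples are hypothetical, and real model APIs may expect a structured message format instead.

```python
from typing import List, Tuple


def build_few_shot_prompt(
    instruction: str,
    examples: List[Tuple[str, str]],
    query: str,
) -> str:
    """Build a prompt from an instruction, worked examples, and a new input.

    An empty `examples` list produces a zero-shot prompt.
    """
    lines = [instruction.strip(), ""]
    for example_input, example_output in examples:
        lines += [f"Input: {example_input}", f"Output: {example_output}", ""]
    # The model is expected to continue the text after the final "Output:" label.
    lines += [f"Input: {query}", "Output:"]
    return "\n".join(lines)


if __name__ == "__main__":
    prompt = build_few_shot_prompt(
        "Classify each support ticket as BILLING, TECHNICAL, or OTHER.",
        [
            ("I was charged twice this month.", "BILLING"),
            ("The app crashes when I log in.", "TECHNICAL"),
        ],
        "How do I export my invoices?",
    )
    print(prompt)
```

Swapping or adding examples changes the model's behavior for a new use case without any retraining, which is what keeps deployment friction low.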

Cons of Generative AI Foundation Models

- High and unpredictable cost structure: Foundation models require substantial compute resources for training and inference, leading to high operational costs and budgeting uncertainty. For enterprises, this translates into difficulty forecasting AI ROI and a risk of cost overruns as usage scales.

- Reliability risks in business-critical use cases: Factual inaccuracy (hallucination) is a key limitation of generative AI. In enterprise settings such as legal advice, financial reporting, or medical documentation, these errors pose material operational and reputational risks if they are not mitigated through controls and human oversight.

- Governance, compliance, and regulatory exposure: Because foundation models are trained on large, opaque datasets, enterprises face challenges around data provenance, auditability, and regulatory compliance. These issues can slow deployment in regulated industries and increase legal exposure if governance frameworks are not established.

- Limited transparency and explainability: The black-box nature of foundation models makes it difficult for enterprises to explain decisions, trace errors, or certify systems for regulated environments. This limits adoption in areas where explainability and accountability are mandatory.

- Organizational readiness and skill gaps: Successful enterprise adoption requires new capabilities in AI governance, prompt design, system integration, and human-in-the-loop workflows. Without organizational alignment and leadership oversight, enterprises struggle to move from experimentation to sustained value.

2. Large Language Models (LLMs) & Domain-Specific Language Models

Large Language Models (LLMs) – Applied Definition

Large Language Models (LLMs) are large, pre-trained neural networks capable of understanding and generating human-like language, which can be adapted to specialized domains through fine-tuning and optimization strategies rather than being trained from scratch. Their value lies in serving as a general foundation that can be incrementally adapted to domain-specific enterprise needs (Lu et al., 2025).

Domain-Specific / Fine-Tuned LLMs – Applied Definition

Domain-Specific Language Models (DSLMs) are AI language models that have undergone continued pre-training (CPT) and/or supervised fine-tuning (SFT) on data, terminology, and task contexts unique to a particular industry or subject area. This enables them to understand and generate content with higher accuracy, relevance, and contextual alignment than general-purpose models when applied to specialized tasks. These models incorporate domain knowledge into their representations so that they can handle specialized vocabulary, reasoning patterns, and task structures that general models often miss or misinterpret (Fasoo, 2026).

Pros of Large Language Models

- Cost-effective specialization without retraining from scratch: Enterprises can adapt existing foundation models through continued pre-training (CPT), supervised fine-tuning (SFT), and preference optimization instead of training new models end-to-end. This significantly reduces capital expenditure, time-to-deployment, and infrastructure requirements, making advanced AI capabilities more accessible to organizations.

- Improved accuracy in domain-specific enterprise tasks: Experimental results (Lu et al., 2025) show that fine-tuned and merged models achieve substantially higher accuracy than base models in specialized evaluations. For enterprises, this translates into more reliable AI outputs in technical, scientific, or operational contexts where generic models underperform.

- Reusable AI assets across multiple business use cases: Once a model is adapted, it can support multiple downstream tasks (reasoning, summarization, structured output, multi-turn dialogue), so a single adaptation effort can serve several business use cases.

- Enables automation of complex, knowledge-intensive workflows: Fine-tuned models can generate structured outputs, perform multi-step reasoning, and produce domain-specific synthesis, supporting automation beyond simple chat or Q&A. This aligns with enterprise goals of workflow automation rather than isolated AI assistance.
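Model merging combines the weights of several fine-tuned checkpoints that share one architecture. The specific merging strategies behind the results above are not detailed in this section, so the sketch below uses simple linear merging (a weighted average of each parameter) as an illustrative assumption. Checkpoints are modeled as dictionaries of flat Python lists instead of real tensors, and `linear_merge` is a hypothetical helper.

```python
from typing import Dict, List, Sequence

# Hypothetical representation: {parameter_name: flat list of weights}.
# Real checkpoints hold tensors; plain lists keep the sketch dependency-free.
Checkpoint = Dict[str, List[float]]


def linear_merge(checkpoints: Sequence[Checkpoint], weights: Sequence[float]) -> Checkpoint:
    """Merge same-architecture checkpoints by a weighted average of every parameter."""
    if not checkpoints or len(checkpoints) != len(weights):
        raise ValueError("need exactly one weight per checkpoint")
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    coeffs = [w / total for w in weights]  # normalize so coefficients sum to 1

    reference = checkpoints[0]
    for ckpt in checkpoints[1:]:
        if ckpt.keys() != reference.keys():
            raise ValueError("checkpoints must share the same parameter names")

    merged: Checkpoint = {}
    for name, values in reference.items():
        if any(len(ckpt[name]) != len(values) for ckpt in checkpoints):
            raise ValueError(f"shape mismatch for parameter {name!r}")
        merged[name] = [
            sum(c * ckpt[name][i] for c, ckpt in zip(coeffs, checkpoints))
            for i in range(len(values))
        ]
    return merged


if __name__ == "__main__":
    general = {"layer.weight": [1.0, 2.0]}
    domain_sft = {"layer.weight": [3.0, 4.0]}
    print(linear_merge([general, domain_sft], [0.5, 0.5]))  # {'layer.weight': [2.0, 3.0]}
```

Because some merge combinations can underperform the base model, each merged candidate would still need to be benchmarked before deployment.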

Cons of Large Language Models

- High operational complexity and expertise requirements: While cheaper than training from scratch, fine-tuning and model merging require specialized ML expertise, careful benchmarking, and iterative experimentation. Lu et al. (2025) note that outcomes vary significantly depending on the strategy chosen, making large-scale enterprise deployment non-trivial.

- Sensitivity to data quality: Performance degrades when training data quality declines, even if dataset size increases. For enterprises, this implies that poorly curated internal data can reduce AI effectiveness, increasing governance and data-engineering costs.

- Scaling limits for smaller or cost-constrained models: Emergent capabilities from model merging do not appear in very small models (e.g., 1.7B parameters). This limits the effectiveness of aggressive cost-cutting strategies and suggests a minimum viable scale for enterprise-grade performance.

- Reduced transparency and explainability: Although performance improves, Lu et al. (2025) implicitly highlight that merged and heavily fine-tuned models become more complex and less interpretable, complicating auditing, debugging, and regulatory compliance, all key concerns for enterprises in regulated industries.

- Diminishing returns without systematic evaluation: Not all fine-tuning or merging strategies improve performance; some combinations reduce accuracy relative to baseline models. This introduces enterprise risk if AI programs scale without rigorous evaluation, benchmarking, and governance controls.

3. Small Language Models (SLMs)

SLMs are language models optimized (via pruning, quantization, and efficient inference pipelines) to run on local hardware with low latency and acceptable task performance. Properly optimized SLMs can perform in-domain function-calling tasks on resource-constrained devices (Khiabani, 2025).

SLMs enable on-device deployment (privacy by design, offline capability) and meaningfully lower recurring inference costs compared with cloud inference, making them attractive for privacy-sensitive edge applications (e.g., in-vehicle assistants, mobile apps).
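Pruning and quantization are the two compression steps named above. As a minimal sketch, assuming unstructured magnitude pruning and symmetric per-tensor int8 quantization (the pipeline in Khiabani (2025) may use different variants, for example structured pruning followed by healing), the following shows how each step shrinks a single weight vector. All function names are hypothetical.

```python
from typing import List, Tuple


def magnitude_prune(weights: List[float], sparsity: float) -> List[float]:
    """Zero out the `sparsity` fraction of weights with the smallest absolute value."""
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest magnitude; the first n_prune are removed.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor int8 quantization: w is approximated by q * scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    """Map int8 codes back to approximate float weights."""
    return [v * scale for v in q]


if __name__ == "__main__":
    w = [0.9, -0.05, 0.4, 0.01, -0.7, 0.2]
    pruned = magnitude_prune(w, sparsity=0.5)  # removes the 3 smallest-magnitude weights
    print(pruned)  # [0.9, 0.0, 0.4, 0.0, -0.7, 0.0]
    q, scale = quantize_int8(pruned)
    print(q, scale)
```

Storing `q` as int8 takes one byte per weight instead of four for float32, and pruned zeros can be skipped by sparse kernels. The cost is a rounding error of at most half of `scale` per weight, plus whatever signal the pruned weights carried, which is why aggressive pruning degrades quality.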

Pros of Small Language Models

- Lower operational costs: By pruning, quantizing, and deploying SLMs locally, enterprises can avoid recurring cloud inference costs and reduce infrastructure complexity. Khiabani (2025) shows that substantial parameter reduction (up to ~50%) can still preserve acceptable performance, making SLMs cost-efficient for scaled deployment.

- Improved data privacy and regulatory alignment: Because SLMs run on-device, sensitive user data (e.g., voice commands, behavioral inputs) does not need to be transmitted to external servers. This directly benefits enterprises operating under privacy, security, and data-sovereignty constraints.

- Enables on-device AI with low latency and high reliability: Optimized SLMs can run entirely on local hardware (e.g., vehicle head units) and achieve real-time inference (up to 11 tokens/sec) without hardware acceleration. For enterprises, this supports low-latency user experiences, offline operation, and reduced dependency on cloud connectivity.

- Simplifies integration of complex systems: Khiabani (2025) shows that SLMs can act as intermediary agents between users and complex backend systems. This reduces engineering effort when adding or updating features, supporting faster product iteration and modular system design.

- Enables automation beyond rule-based systems: SLMs replace rigid rule-based logic with language-driven function-calling, allowing enterprises to automate complex, multi-step interactions more flexibly. The study reports higher function-calling accuracy (≈0.85–0.88) than traditional production systems (~0.75), indicating tangible performance gains (Khiabani, 2025).
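The intermediary-agent pattern can be sketched as a thin dispatcher: the SLM turns a user utterance into a structured call, and backend code validates and executes it against an allow-list. This sketch assumes the model was fine-tuned to emit JSON of the form `{"name": ..., "arguments": {...}}`; that format, the registry, and both vehicle functions are hypothetical, and the model output is hard-coded rather than generated.

```python
import json
from typing import Any, Callable, Dict


# Hypothetical backend functions the SLM is allowed to call.
def set_cabin_temperature(celsius: float) -> str:
    return f"cabin temperature set to {celsius:.1f} C"


def play_media(source: str) -> str:
    return f"playing {source}"


# Allow-list: only functions registered here can be triggered by the model.
REGISTRY: Dict[str, Callable[..., str]] = {
    "set_cabin_temperature": set_cabin_temperature,
    "play_media": play_media,
}


def dispatch(model_output: str) -> str:
    """Validate a model-emitted function call and route it to the backend."""
    try:
        call: Any = json.loads(model_output)
    except json.JSONDecodeError:
        return "error: model output is not valid JSON"
    if not isinstance(call, dict):
        return "error: expected a JSON object"
    name = call.get("name")
    if not isinstance(name, str) or name not in REGISTRY:
        return f"error: unknown function {name!r}"
    args = call.get("arguments", {})
    if not isinstance(args, dict):
        return "error: arguments must be a JSON object"
    try:
        return REGISTRY[name](**args)
    except (TypeError, ValueError) as exc:  # wrong argument names or types
        return f"error: bad arguments ({exc})"


if __name__ == "__main__":
    # In deployment this string would come from the SLM, e.g. for "make it 21 degrees".
    print(dispatch('{"name": "set_cabin_temperature", "arguments": {"celsius": 21}}'))
```

With this split, adding a feature typically means registering one more backend function (and fine-tuning on examples that call it) rather than extending hand-written rules, while the allow-list keeps the model from triggering anything unregistered.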

Cons of Small Language Models

- Performance trade-offs compared to large models: As SLM size decreases, benchmark scores decline, which limits their suitability for highly open-ended or knowledge-intensive enterprise tasks.

- Significant optimization and tuning effort required: Deploying SLMs at enterprise quality requires pruning, healing, fine-tuning, and benchmarking, all of which demand specialized expertise and experimentation. This increases engineering complexity and operational overhead compared to using managed cloud models.

- Sensitivity to aggressive model compression: Khiabani (2025) finds that removing more than ~30% of parameters leads to noticeable performance degradation. This limits cost-cutting strategies and requires enterprises to carefully balance efficiency against reliability.

- Limited general-purpose capability: SLMs perform best when fine-tuned for narrow, well-defined tasks (e.g., in-vehicle function-calling). Their effectiveness drops when they are asked to handle broad, unstructured queries, reducing their applicability as universal enterprise assistants.

- Ongoing maintenance and lifecycle management: Because SLMs are embedded into products, updates require model retraining, re-validation, and redeployment rather than simple API upgrades. This introduces long-term maintenance and versioning challenges for enterprises.

4. Why Enterprises Move from “Using AI Models” to “Building AI Infrastructure”

One of the primary drivers is model fragility under change. In operational settings, data distributions, feature relevance, and user behavior evolve continuously, causing performance degradation through concept drift and feature obsolescence. Reda et al. (2025) demonstrate that even high-performing models can fail silently when these shifts are not detected and addressed in real time. As a result, enterprises are compelled to move beyond one-off deployment toward infrastructure that continuously monitors, adapts, and recalibrates AI behavior.

Closely related is the issue of scalability in AI operations. Traditional model-centric approaches rely heavily on manual intervention for drift detection, retraining, and validation, which becomes untenable as AI systems scale across products, regions, and business units. Reda et al. (2025) also show that infrastructure-level automation, in which detection, response, and retraining are embedded into system control loops, dramatically reduces recovery time and operational burden, making large-scale enterprise adoption feasible.
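Automated drift detection inside a control loop can be as simple as comparing live feature values against a training-time reference. The sketch below uses the Population Stability Index (PSI), a common drift statistic chosen here for illustration rather than the method of Reda et al. (2025). The 0.25 retraining threshold is a widely used rule of thumb, not a value from the study, and the function names are hypothetical.

```python
import bisect
import math
from typing import List, Sequence


def quantile_edges(reference: Sequence[float], n_bins: int = 10) -> List[float]:
    """Interior bin edges taken at quantiles of the reference (training-time) sample."""
    ordered = sorted(reference)
    return [ordered[len(ordered) * k // n_bins] for k in range(1, n_bins)]


def bin_fractions(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Fraction of values per bin, floored at a small epsilon so log() stays finite."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect.bisect_right(edges, v)] += 1
    return [max(c / len(values), 1e-6) for c in counts]


def psi(reference: Sequence[float], live: Sequence[float], n_bins: int = 10) -> float:
    """Population Stability Index: sum over bins of (live - ref) * ln(live / ref)."""
    edges = quantile_edges(reference, n_bins)
    ref = bin_fractions(reference, edges)
    cur = bin_fractions(live, edges)
    return sum((c - r) * math.log(c / r) for c, r in zip(cur, ref))


def drift_check(reference: Sequence[float], live: Sequence[float], threshold: float = 0.25) -> bool:
    """True when drift is large enough that the control loop should trigger retraining."""
    return psi(reference, live) > threshold


if __name__ == "__main__":
    training = [i / 1000 for i in range(1000)]       # reference window
    shifted = [0.5 + i / 2000 for i in range(1000)]  # live window concentrated in the upper half
    print(drift_check(training, training), drift_check(training, shifted))  # False True
```

In a control loop, `drift_check` would run on each monitoring window and, when it returns True, enqueue a retraining and validation job instead of waiting for a manual review.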

This also highlights a fundamental shift in how enterprises define success. Rather than optimizing for accuracy alone, organizations increasingly require resilience, defined as the ability of AI systems to preserve accuracy, fairness, and reliability under continuous uncertainty. This reframing exposes the limits of model-centric thinking: resilience cannot be guaranteed by individual models, but only by systems that coordinate data pipelines, feature management, monitoring, governance, and human oversight as integrated infrastructure.

Governance and compliance further accelerate this transition. Fairness, explainability, and regulatory alignment are often handled as retrospective audits, leaving enterprises exposed to legal and reputational risk. In contrast, infrastructure-centric approaches embed explainability mechanisms, bias monitoring, and compliance checks directly into operational workflows, allowing organizations to respond proactively rather than reactively to governance failures.
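As one example of a bias check embedded in the workflow rather than run as a retrospective audit, the sketch below computes the demographic parity gap (the largest difference in positive-prediction rates between groups) and holds a model release when it exceeds a limit. The metric is a standard fairness measure picked for illustration; the 0.1 limit and the function names are assumptions.

```python
from collections import defaultdict
from typing import Dict, Sequence


def positive_rates(predictions: Sequence[int], groups: Sequence[str]) -> Dict[str, float]:
    """Share of positive (1) predictions within each group."""
    if len(predictions) != len(groups) or not predictions:
        raise ValueError("need one group label per prediction")
    totals: Dict[str, int] = defaultdict(int)
    positives: Dict[str, int] = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_gap(predictions: Sequence[int], groups: Sequence[str]) -> float:
    """Largest difference in positive-prediction rate between any two groups."""
    rates = positive_rates(predictions, groups).values()
    return max(rates) - min(rates)


def release_gate(predictions: Sequence[int], groups: Sequence[str], max_gap: float = 0.1) -> str:
    """Runs on every candidate model in the pipeline, not as an after-the-fact audit."""
    gap = demographic_parity_gap(predictions, groups)
    return "approve" if gap <= max_gap else f"hold for human review (gap={gap:.2f})"


if __name__ == "__main__":
    preds = [1, 1, 0, 0, 1, 0, 0, 0]
    groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
    print(release_gate(preds, groups))  # hold for human review (gap=0.25)
```

Routing a failed check to human review, rather than silently blocking or approving, keeps accountability with people while the detection itself stays automated.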

Another critical motivation is feature volatility, where commonly used input signals degrade or become adversarially manipulated over time. The research demonstrates that managing feature reliability requires infrastructure capable of detecting instability and dynamically substituting or reweighting features, capabilities that cannot be implemented at the level of individual models alone.
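Dynamic substitution or reweighting of features can be sketched as follows, assuming a linear scoring model with named feature weights and a designated fallback for each feature. Instability is measured here as the shift in a feature's mean, expressed in reference standard deviations; this test, the `max_shift=1.0` threshold, and all names (including the example features) are illustrative assumptions rather than the method from the cited research.

```python
import statistics
from typing import Dict, List, Optional


def mean_shift(reference: List[float], live: List[float]) -> float:
    """Absolute change in mean, in units of the reference standard deviation."""
    sd = statistics.pstdev(reference) or 1e-12  # guard against constant features
    return abs(statistics.fmean(live) - statistics.fmean(reference)) / sd


def reweight_features(
    weights: Dict[str, float],
    reference: Dict[str, List[float]],
    live: Dict[str, List[float]],
    fallbacks: Dict[str, Optional[str]],
    max_shift: float = 1.0,
) -> Dict[str, float]:
    """Zero out unstable features and move their weight to a stable fallback feature."""
    unstable = {n for n in weights if mean_shift(reference[n], live[n]) > max_shift}
    adjusted = {n: (0.0 if n in unstable else w) for n, w in weights.items()}
    for name in unstable:
        backup = fallbacks.get(name)
        # Only substitute into a fallback that is itself present and stable.
        if backup in adjusted and backup not in unstable:
            adjusted[backup] += weights[name]
    return adjusted


if __name__ == "__main__":
    weights = {"click_rate": 0.6, "session_length": 0.4}
    reference = {"click_rate": [0.1, 0.2, 0.3], "session_length": [10.0, 12.0, 14.0]}
    live = {"click_rate": [0.9, 1.0, 1.1], "session_length": [11.0, 12.0, 13.0]}
    print(reweight_features(weights, reference, live, {"click_rate": "session_length"}))
    # {'click_rate': 0.0, 'session_length': 1.0}
```

The point of placing this logic in infrastructure is that the same check can run across every model that consumes `click_rate`, instead of being rebuilt inside each one.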

Underlying all these factors is the concept of trust continuity. As AI systems increasingly influence high-stakes decisions, enterprises must ensure that stakeholders (customers, regulators, and internal teams) can trust AI behavior over time. Reda et al. (2025) argue that trust is not a static property of a model, but an emergent outcome of infrastructure that integrates transparency, monitoring, and structured human oversight throughout the AI lifecycle.

Taken together, these factors show that enterprises are not abandoning AI models; rather, they are recontextualizing them as components within a broader system. As AI becomes mission-critical, organizations are adopting an infrastructure mindset, treating AI much like cloud or security platforms: continuously adaptive, governed by design, and resilient to change. This shift marks a decisive move from experimenting with AI to operationalizing it as core enterprise infrastructure.

WHY IT MATTERS

The shift from using AI models to building AI systems is not a technical nuance; it is a strategic inflection point that directly determines whether AI delivers durable enterprise value or remains a costly experiment.

First, enterprise value no longer comes from model capability alone. As the research across foundation models, domain-specific LLMs, and SLMs consistently shows, even highly capable models degrade under real-world conditions: data drifts, features decay, regulations evolve, and usage scales unpredictably. Organizations that treat AI as a deploy-once artifact expose themselves to silent failures, compliance risk, and escalating operational costs. In contrast, enterprises that invest in AI as infrastructure (integrating monitoring, governance, retraining, and human oversight) are able to sustain performance as conditions change.

Second, cost efficiency is now a systems problem, not a model choice. Foundation models offer speed and breadth, but without orchestration, guardrails, and workload routing, they introduce volatile costs and governance exposure. Domain-specific LLMs improve accuracy and ROI, but only when supported by rigorous evaluation pipelines and lifecycle management. SLMs dramatically reduce inference costs and enable privacy-by-design deployments, but demand disciplined optimization and update processes. The research makes clear that cost control emerges from architectural decisions (hybrid model stacks, task routing, and continuous evaluation), not from selecting “the best” model in isolation.
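Task routing across a hybrid model stack can start as a small rule set that sends each request to the least expensive tier able to meet its constraints. The tier names, request fields, supported domains, and precedence order below are hypothetical; a production router would also weigh latency, measured accuracy, and cost per request.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical tier names; a real stack would map these to concrete model endpoints.
SLM_ON_DEVICE = "slm-on-device"
DOMAIN_LLM = "domain-llm"
FOUNDATION_MODEL = "foundation-model"

# Domains for which a fine-tuned model is assumed to exist.
SUPPORTED_DOMAINS = {"legal", "finance"}


@dataclass
class Request:
    text: str
    contains_personal_data: bool = False
    needs_offline: bool = False
    narrow_task: bool = False  # e.g. a known intent or function call
    domain: Optional[str] = None


def route(req: Request) -> str:
    """Pick the least expensive tier that satisfies the request's constraints."""
    # Privacy and connectivity constraints come first: keep the data on the device.
    if req.contains_personal_data or req.needs_offline:
        return SLM_ON_DEVICE
    # Narrow, well-defined tasks are where SLMs perform best.
    if req.narrow_task:
        return SLM_ON_DEVICE
    # Specialized requests go to the fine-tuned domain model where one exists.
    if req.domain in SUPPORTED_DOMAINS:
        return DOMAIN_LLM
    # Everything else is open-ended and goes to the general-purpose foundation model.
    return FOUNDATION_MODEL


if __name__ == "__main__":
    print(route(Request("Summarize the indemnity clause", domain="legal")))  # domain-llm
    print(route(Request("Draft a market-entry memo for Brazil")))            # foundation-model
```

The precedence order is a design choice: here privacy outranks domain specialization, so a finance request containing personal data stays on the device. An enterprise with a self-hosted domain model might order these rules differently.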

Third, trust, compliance, and resilience have become core performance metrics. Enterprises operating in finance, healthcare, education, and public-sector environments cannot rely on retrospective audits or manual checks. The cited studies demonstrate that fairness, explainability, and reliability must be embedded directly into AI operations. This requires infrastructure that continuously detects drift, audits behavior, enforces policy, and keeps humans in the loop. Trust, in this context, is not a feature of any single model; it is an emergent property of the system surrounding it.

Finally, AI maturity is now measured by organizational readiness, not experimentation velocity. Many enterprises can deploy pilots quickly; few can operate AI reliably at scale. The research highlights that sustained value depends on governance frameworks, data quality discipline, cross-functional ownership, and clear accountability. Organizations that internalize AI as core infrastructure, similar to cloud or cybersecurity, are better positioned to scale responsibly, adapt faster, and protect long-term value.

The competitive advantage of AI in 2026 comes from systems thinking. Models remain essential, but only as components within resilient, governed, and continuously adaptive enterprise AI platforms.